
Entertainment

How Taylor Swift Writes About Being Taylor Swift

SNP’s power-sharing deal with the Scottish Greens collapses

World

By Mary McCool & Craig Williams, BBC Scotland News. 25 April 2024, 07:36 BST

Garry’s Mod faces deluge of Nintendo-related DMCA takedown notices

Technology

Facepunch Studios has announced on Steam that it’s removing 20 years’ worth of Nintendo-related workshop items for its sandbox game Garry’s Mod …

Shoppers in their 50s say this vitamin C serum — on sale for $10 — helps fade signs of aging

LifeStyle

You’re double cleansing, moisturizing and wearing SPF, but do you have Vitamin C in your skincare routine? This super product works to …

Comcast Edges Wall Street Q1 Estimates; Peacock Reaches 34M Subscribers And Keeps Trimming Losses

Business

UPDATED with executive comments. Nearly four years since the launch of NBCUniversal streaming flagship Peacock, Comcast President Mike …

Sophia Bush Pens Essay on Experiencing ‘Real Joy’ After Coming Out

Entertainment

Sophia Bush is opening up like never before. Penning an essay as Glamour’s April 2024 cover star, Bush, 41, publicly came out …

Legal experts say the TikTok divest-or-ban bill could stand up in court despite being a free-speech disaster

Politics

President Joe Biden signed the TikTok divest-or-ban bill into law on Wednesday. TikTok said it would challenge the law in court, citing …

The Fantasy Baseball Numbers Do Lie: Still hope for Josh Hader and struggling Astros?

Sports

Josh Hader and the Astros have gotten off to a terrible start, but there’s still plenty of reason for optimism after a …

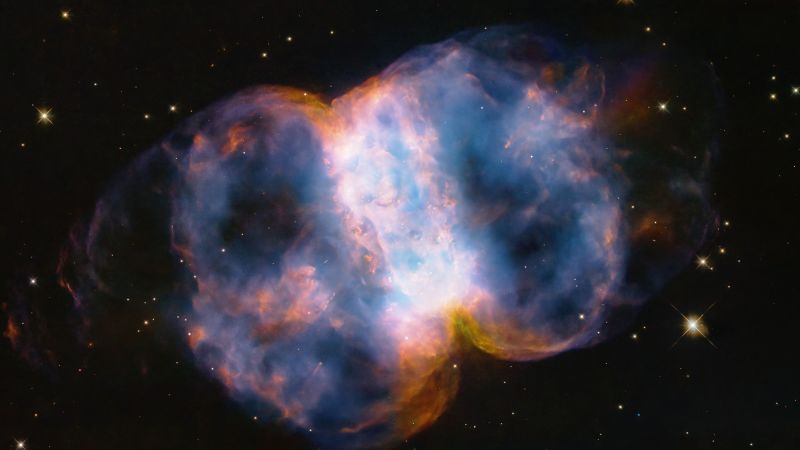

Hubble image may contain evidence of stellar cannibalism in dumbbell-shaped nebula

Science

CNN — The …
